Almost every public conversation about quantum computing eventually arrives at the same place: it will break cryptography. Bitcoin will collapse. Banks will be drained. The internet's trust layer will disintegrate.

This narrative is wrong on two fronts. The threat to cryptography is real but bounded, telegraphed for a decade, and largely already mitigated. And it is overshadowing the more important story — that quantum hardware looks built for exactly the workloads that AI is running into walls on.

Today's frontier AI systems are pattern matchers. Trained on enough data, they recognize, retrieve, and recombine. What they cannot do — at least, not without enormous and increasingly inefficient amounts of compute — is reason. Probabilistic inference. Search over hypothesis spaces. Combinatorial planning. These are different operations from next-token prediction, and they sit on different mathematical foundations. Foundations that quantum computers are unusually well-suited to operate on.

The Reasoning Ceiling

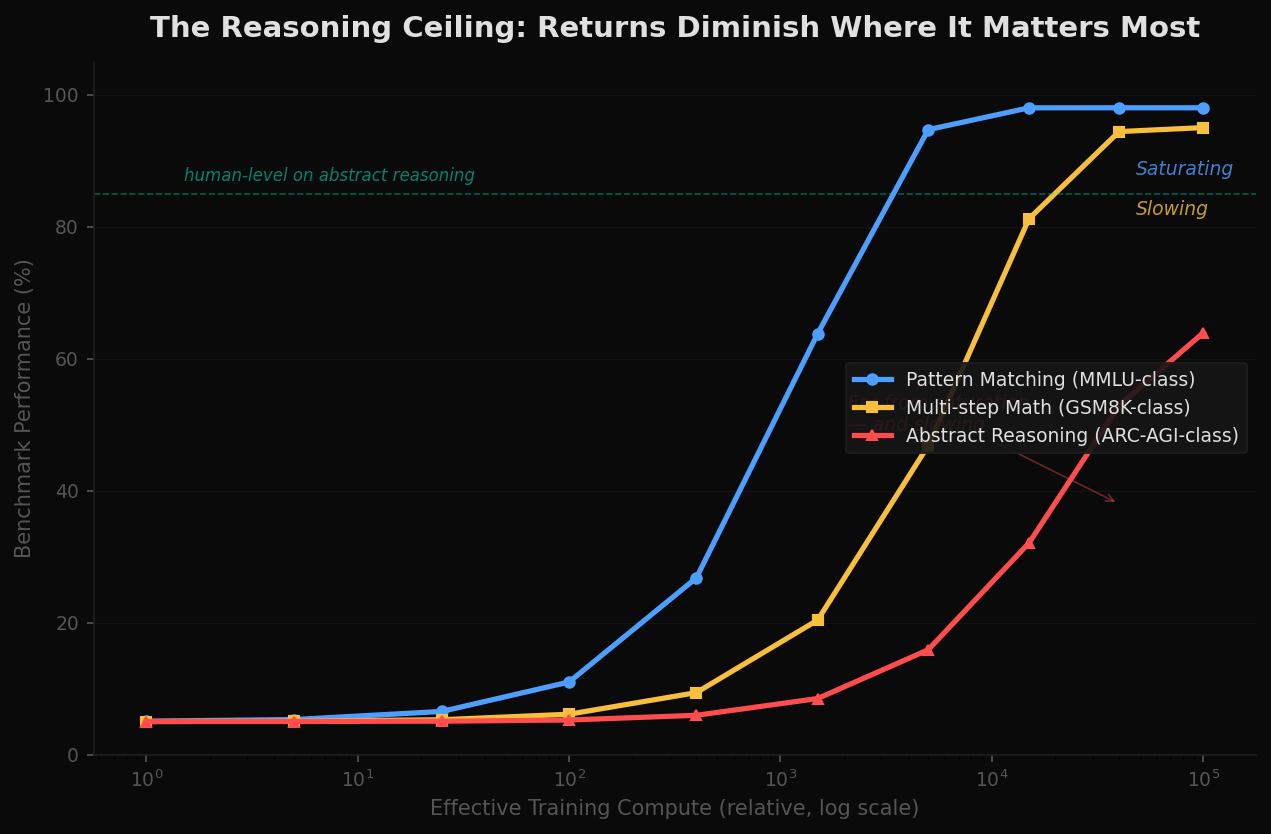

For five years the dominant story in AI has been that scale solves everything. Add more parameters, more data, more compute, and capability emerges. For pattern matching, this has held remarkably well. Knowledge benchmarks like MMLU are saturating. Frontier models score above the 95th percentile on professional exams. The pattern is recognized; the data is absorbed.

The story breaks down at reasoning. On benchmarks designed to test abstract reasoning (ARC-AGI, novel planning tasks, multi-step logical puzzles unrelated to anything in the training set), performance is far below human level and improving slowly. The same scale curves that produced rapid gains on knowledge tasks produce sublinear gains here.

The interpretation is contested. Some argue the gap closes with better data, longer chains of thought, or new architectures layered on top of transformers. Others argue something more fundamental: reasoning is not a pattern-matching problem at all. It is search through structured hypothesis space, probabilistic inference under uncertainty, and combinatorial planning over constraints. Brute-forcing those problems by adding parameters is the wrong tool — like trying to solve a maze by training a larger camera.

Whichever interpretation is correct, the empirical reality is the same. Returns on the dominant scaling axis are diminishing exactly where capability matters most.

What Quantum Actually Does Well

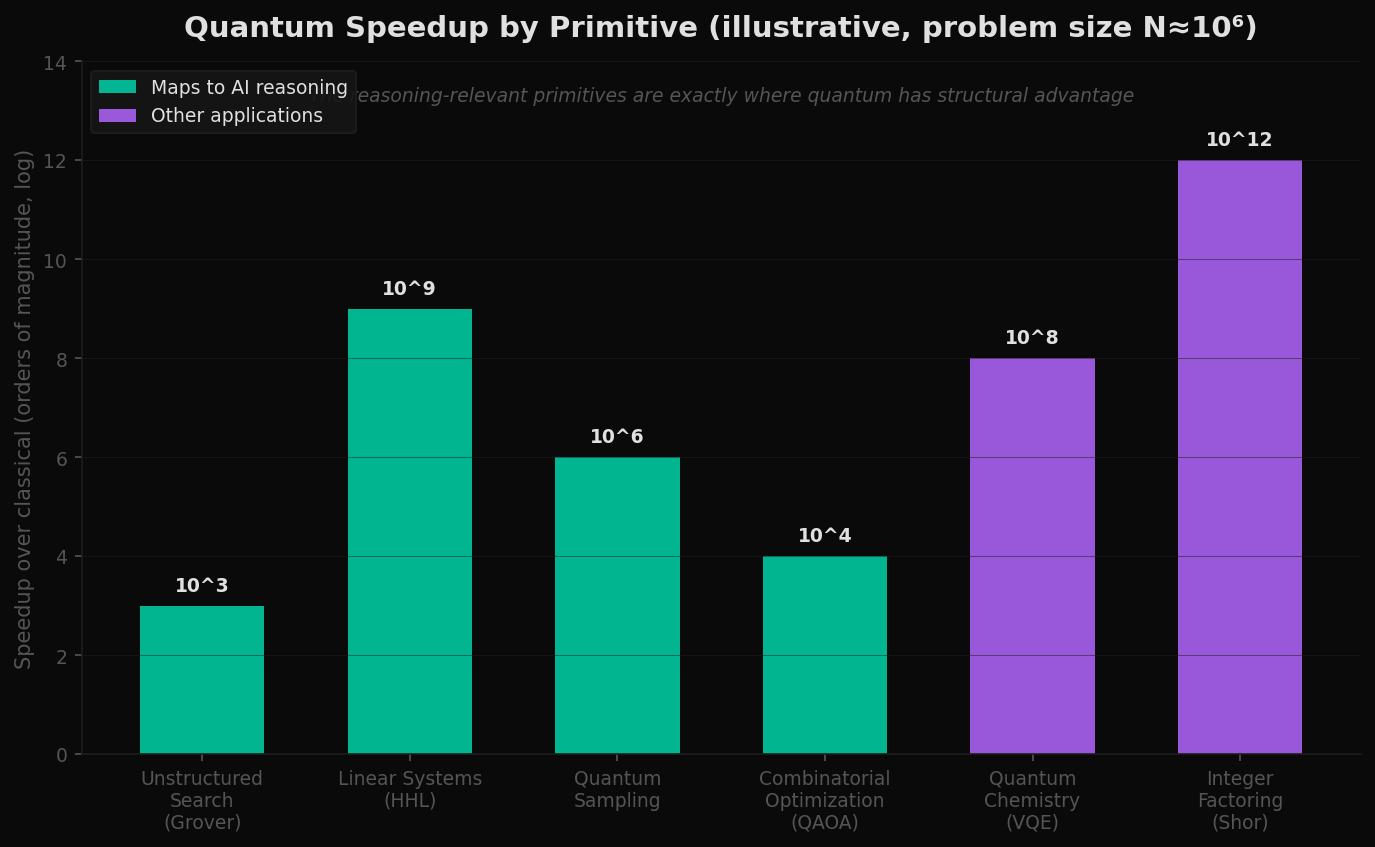

Quantum computing is often discussed as if it were a faster classical computer. It is not. It is a fundamentally different model of computation, and the speedups it offers are sharply concentrated in a small set of mathematical primitives.

For most workloads (running a web server, training a transformer, rendering a frame of video) a quantum computer offers no advantage. For a specific class of problems, the asymptotic advantage ranges from quadratic to exponential.

The primitives that matter for this argument are not the ones the press writes about. Shor's algorithm — the one that breaks RSA — is the most famous quantum algorithm in the world, but it is irrelevant to AI. The relevant primitives are quieter:

- Grover search: a quadratic speedup for unstructured search, finding a marked item among N candidates in roughly √N oracle queries instead of roughly N. The same operation that underpins hypothesis search in AI reasoning.

- Quantum sampling: drawing from probability distributions that are believed to be exponentially expensive to sample classically. The core of probabilistic inference and Bayesian updating.

- HHL and quantum linear systems: exponential speedup for solving linear systems under specific conditions (a sparse, well-conditioned matrix, efficient loading of the input as a quantum state, and readout limited to summary properties of the solution). Relevant to kernel methods, large-scale regression, and the linear algebra that sits underneath every neural network.

- QAOA and quantum optimization: heuristic approaches to combinatorial optimization, without a proven general speedup. Constraint satisfaction, planning, and scheduling.

- Amplitude estimation: quadratic speedup for Monte Carlo, reaching additive error ε in roughly 1/ε queries instead of roughly 1/ε² samples. Faster expected-value computation across uncertainty.

Notice what is missing from this list: training large language models. Quantum computing does not accelerate the dominant AI workload of the present decade. Matrix multiplication on dense parameter tensors is exactly the kind of structured, well-conditioned computation that classical hardware does best. Quantum's advantage is not in the workloads AI has already mastered. Its advantage is in the workloads AI is hitting walls on.
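The quadratic speedups listed above for Grover search and amplitude estimation can be put into rough numbers. The sketch below is back-of-the-envelope arithmetic only, assuming a single marked item for search and dropping all constant factors for Monte Carlo; the function names are illustrative and not taken from any library.

```python
import math


def grover_queries(n_candidates: int) -> int:
    """Approximate oracle calls for Grover search with one marked item: floor(pi/4 * sqrt(N))."""
    return math.floor(math.pi / 4 * math.sqrt(n_candidates))


def classical_expected_queries(n_candidates: int) -> float:
    """Expected checks for a classical scan without repeats, one marked item: (N + 1) / 2."""
    return (n_candidates + 1) / 2


def monte_carlo_samples(epsilon: float) -> int:
    """Order-of-magnitude classical sample count for additive error epsilon (constants dropped)."""
    return round(1 / epsilon ** 2)


def amplitude_estimation_queries(epsilon: float) -> int:
    """Order-of-magnitude quantum query count for the same error (constants dropped)."""
    return round(1 / epsilon)


if __name__ == "__main__":
    n = 10 ** 6
    print("search:", classical_expected_queries(n), "classical vs", grover_queries(n), "Grover")
    eps = 1e-3
    print("Monte Carlo:", monte_carlo_samples(eps), "samples vs",
          amplitude_estimation_queries(eps), "queries")
```

For a million candidates this gives about 785 Grover iterations against an expected 500,000 classical checks. The counts are oracle queries, not wall-clock time; the cost of each query and of loading the problem onto hardware is not modeled.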

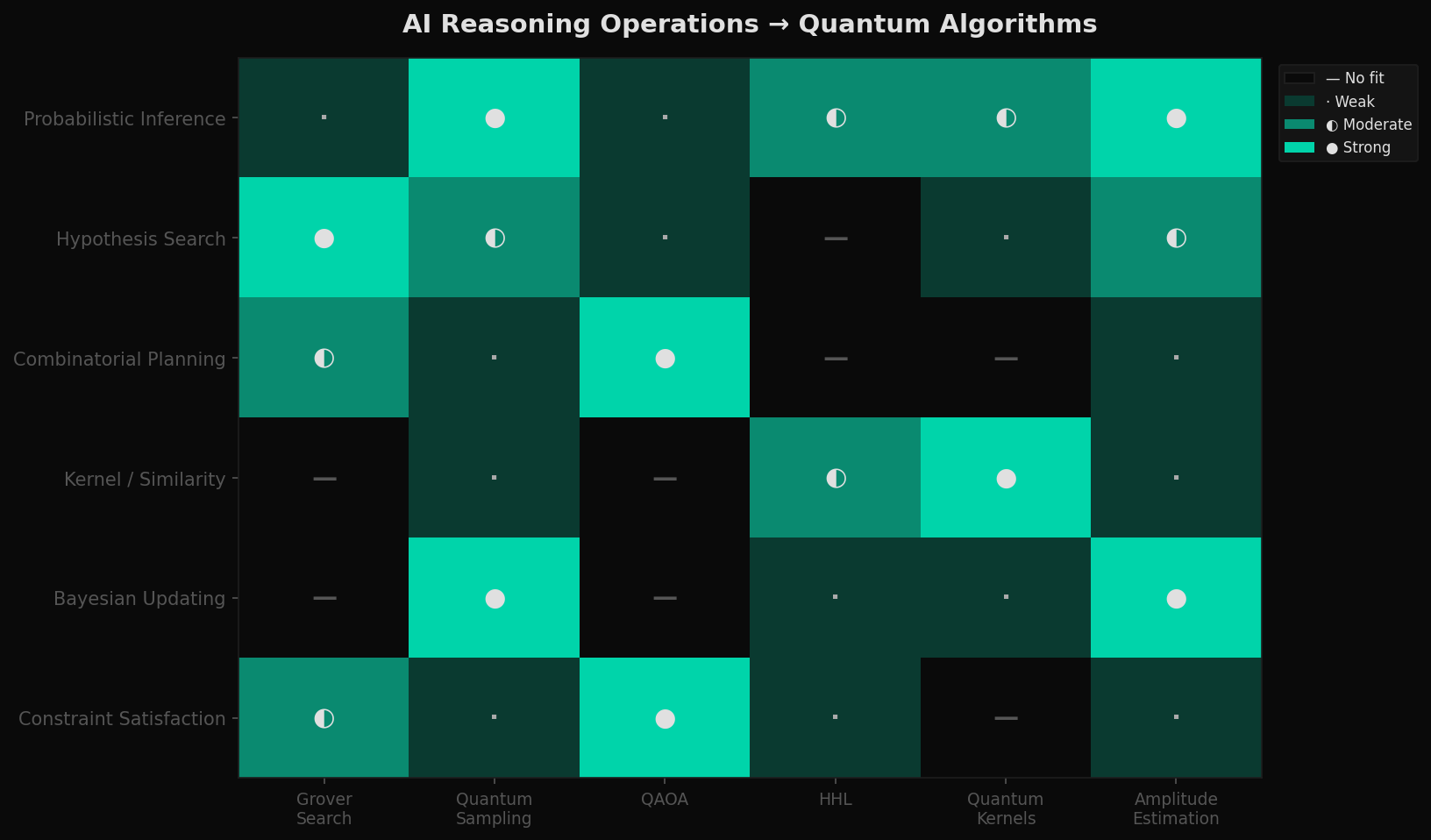

The Mapping

The argument is not that quantum hardware will speed up reasoning by some marginal factor. It is that the cognitive operations underlying reasoning have natural representations as quantum operations — in some cases more natural than their classical equivalents.

Probabilistic inference, the process of updating beliefs given evidence, maps naturally onto quantum hardware. A quantum state is a vector of amplitudes whose squared magnitudes are probabilities, so sampling from a distribution is the native measurement operation. Hypothesis search over a space of candidate explanations, when no structure is available to prune it, is the canonical Grover problem; where structure can be exploited, classical heuristics narrow the gap. Combinatorial planning under constraints is what QAOA is designed for. Kernel methods that compute similarity in high-dimensional feature spaces are where quantum kernels are argued to offer an advantage.
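To make the Grover mapping concrete, here is a minimal classical simulation of the amplitude updates, written in plain Python. It is a sketch under simplifying assumptions: exactly one marked "hypothesis", an ideal noiseless machine, and a list of real amplitudes standing in for the quantum state. The function names are hypothetical.

```python
import math


def optimal_iterations(n_items: int) -> int:
    """Near-optimal Grover iteration count for one marked item."""
    return math.floor(math.pi / 4 * math.sqrt(n_items))


def grover_success_probability(n_items: int, marked: int, iterations: int) -> float:
    """Simulate Grover iterations and return the probability of measuring the marked index."""
    # Start in the uniform superposition: every amplitude is 1/sqrt(N).
    amps = [1 / math.sqrt(n_items)] * n_items
    for _ in range(iterations):
        # Oracle: flip the sign of the marked item's amplitude.
        amps[marked] = -amps[marked]
        # Diffusion: reflect every amplitude about the mean amplitude.
        mean = sum(amps) / n_items
        amps = [2 * mean - a for a in amps]
    # Measurement probability is the squared amplitude.
    return amps[marked] ** 2


if __name__ == "__main__":
    n = 64
    k = optimal_iterations(n)
    print(k, grover_success_probability(n, marked=5, iterations=k))
```

With 64 candidates, six iterations concentrate more than 99% of the probability on the marked item, where a classical scan would expect to check about half the list. The simulation itself takes time proportional to N per iteration, which is why it illustrates the algorithm rather than reproducing the speedup.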

This is not a coincidence. The primitives quantum computers compute well are the primitives that classical computation handles poorly — and those are the primitives reasoning depends on. The architecture mismatch that makes reasoning hard for classical AI is the same mismatch that makes reasoning a natural fit for quantum AI.

The Two Curves

The argument up to here is theoretical. The hardware question is empirical. Can we actually build quantum machines large enough to do this work? On what timeline?

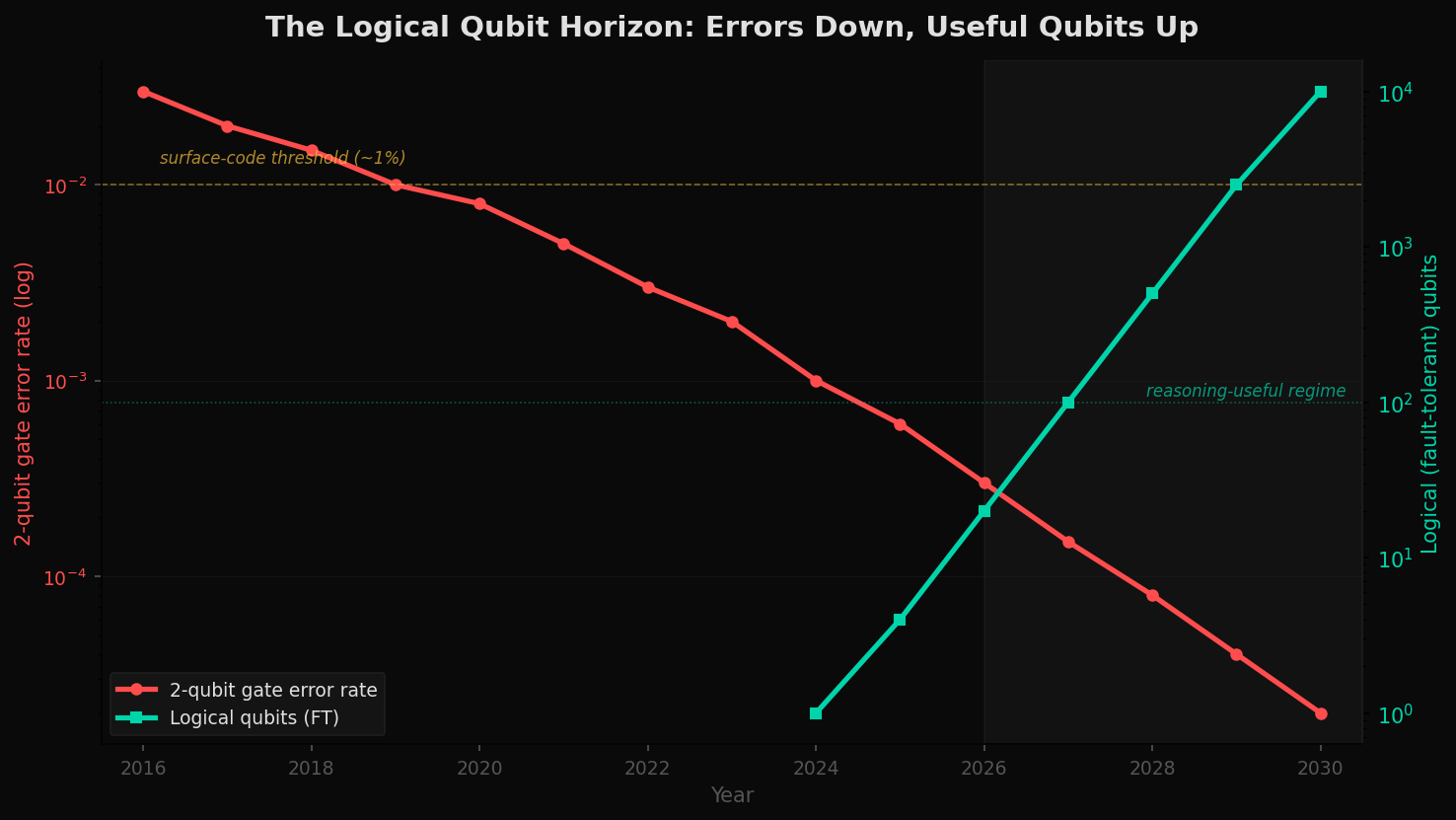

Two curves matter. The first is qubit count — how many physical qubits each vendor can produce. The second is error rate — how reliable each qubit is. Useful quantum reasoning requires both axes to converge. Many qubits with high error rates cannot run a long algorithm. Few qubits with very low error rates cannot represent a large problem.
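The interaction between the two curves can be sketched with a commonly quoted surface-code rule of thumb. Everything numeric here is an assumption for illustration: a threshold of 1e-2, a prefactor of 0.1, a logical error rate that scales as (p / p_th)^((d + 1) / 2) for code distance d, and 2d² − 1 physical qubits per logical qubit. Real overheads depend on the code, the decoder, and the hardware.

```python
def logical_error_rate(p_phys: float, distance: int,
                       p_threshold: float = 1e-2, prefactor: float = 0.1) -> float:
    """Heuristic logical error rate per round for a distance-d surface code (assumed constants)."""
    return prefactor * (p_phys / p_threshold) ** ((distance + 1) / 2)


def min_distance(p_phys: float, target: float, p_threshold: float = 1e-2) -> int:
    """Smallest odd code distance whose heuristic logical error rate meets the target."""
    if p_phys >= p_threshold:
        raise ValueError("physical error rate must be below threshold for correction to help")
    distance = 3
    while logical_error_rate(p_phys, distance, p_threshold) > target:
        distance += 2  # surface-code distances are odd
    return distance


def physical_qubits_per_logical(distance: int) -> int:
    """Data plus measurement qubits for one rotated surface-code patch: 2 * d^2 - 1."""
    return 2 * distance * distance - 1


if __name__ == "__main__":
    for p in (2e-3, 5e-4):
        d = min_distance(p, target=1e-12)
        print(p, d, physical_qubits_per_logical(d))
```

Under these assumed constants, a physical error rate of 2e-3 needs distance 31, or 1,921 physical qubits per logical qubit, to reach a 1e-12 logical error rate. At 5e-4 the same target needs distance 17 and 577 physical qubits. Qubit count and error rate trade off against each other, which is why neither curve alone tells the story.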

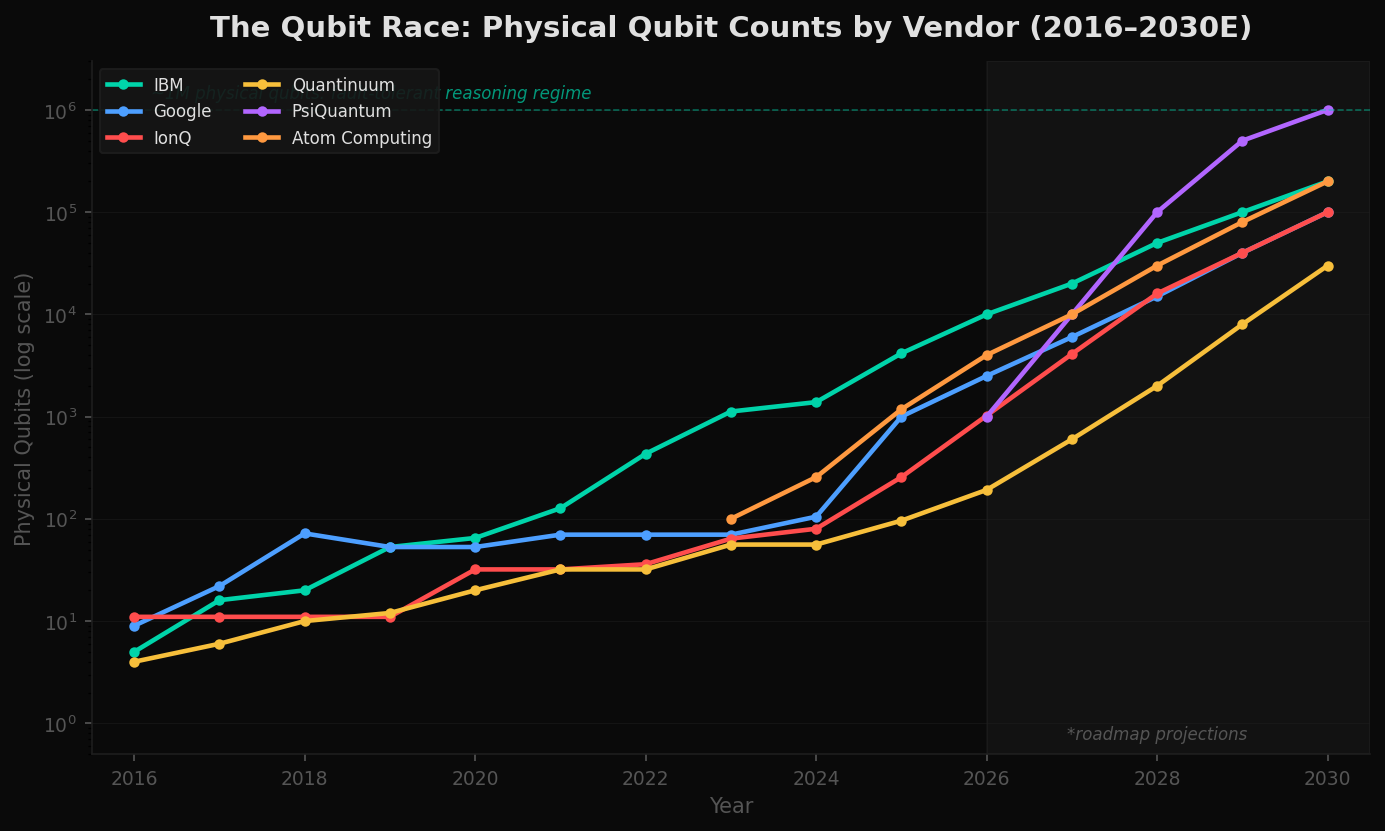

Six serious players are competing on substantially different physical substrates. IBM and Google have led on superconducting qubits. IonQ and Quantinuum have lower error rates per qubit using trapped-ion architectures, at the cost of slower gate operations. PsiQuantum is betting on photonic qubits with the explicit goal of skipping the noisy intermediate stage and going directly to fault tolerance. Atom Computing has shown remarkable scaling on neutral atoms.

The diversity matters because the outcome does not hinge on any single approach. If any one of these architectures crosses the threshold to fault tolerance, the rest are likely to follow within a few years. The question is no longer whether useful quantum hardware will exist; it is when, and which substrate.

The crossover point — where logical qubits exist in numbers large enough to run reasoning-scale algorithms — sits in the early 2030s under conservative assumptions and the late 2020s under aggressive ones. Either way, this is not a fifty-year horizon. It is the horizon of a typical capital cycle.

The Pivot

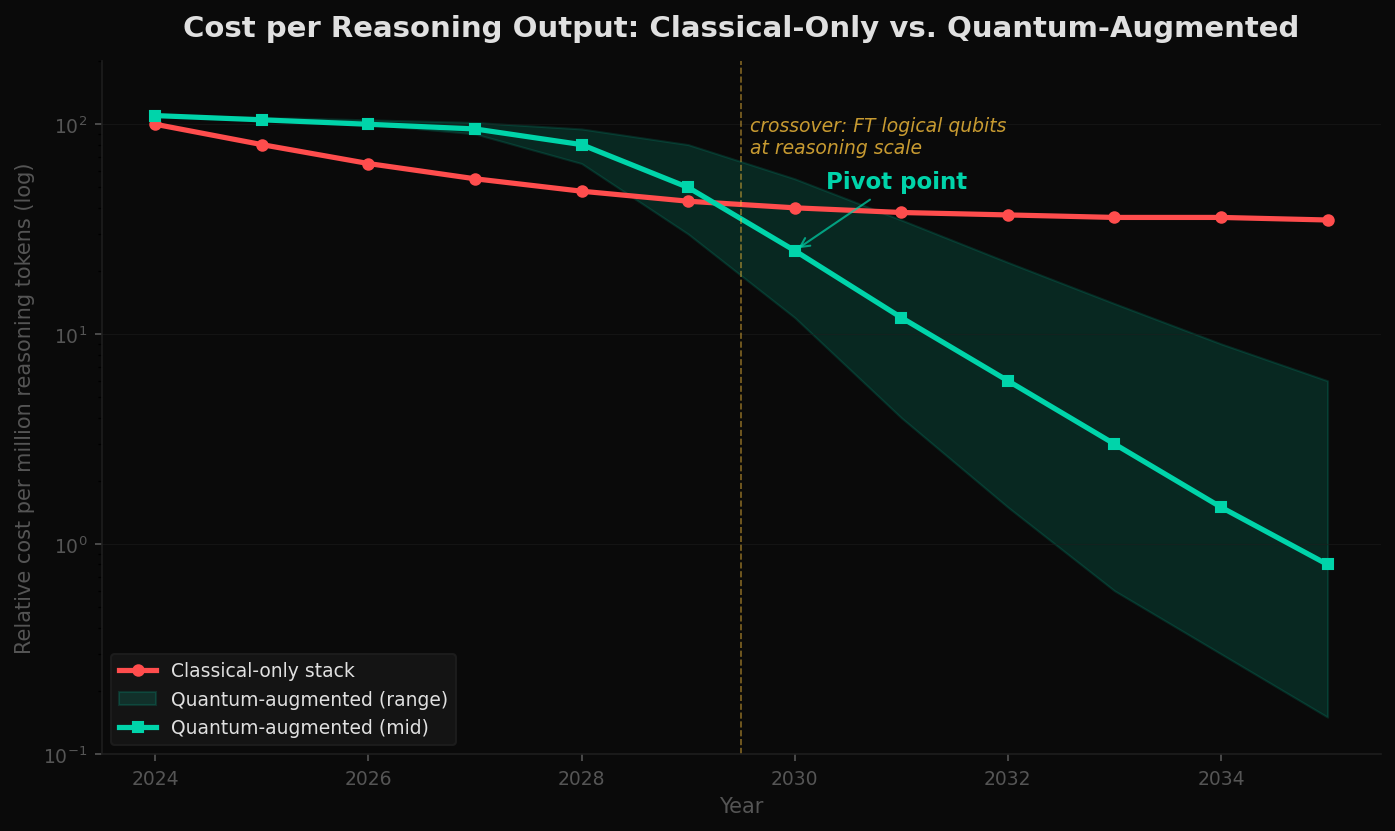

If reasoning workloads are the binding constraint on AI capability, and quantum hardware is a near-term-feasible accelerator for those workloads, then the AI compute stack rearranges itself.

The shape is a pivot, not a substitution. Pattern matching, retrieval, and language modeling stay on classical hardware where they belong. The reasoning layer of an AI system — the part that searches, plans, infers, decides — moves to quantum accelerators that handle those operations natively. The two layers communicate. The system as a whole becomes hybrid by design.

This is not a speculative architecture. Hybrid classical-quantum systems are already the norm in quantum computing research. Variational algorithms run a classical optimizer in tight loop with quantum subroutines. The hardware question is just whether the quantum side is large and reliable enough for the subroutines to be useful at the scale of frontier AI. Today it is not. By the early 2030s, on current trajectories, it likely will be.
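The variational pattern described above can be sketched in a few lines. This is a toy under stated assumptions: the "quantum subroutine" is a single simulated qubit whose expectation value is computed exactly as cos(θ), whereas real hardware would estimate it from repeated measurements. The classical side is plain gradient descent using the parameter-shift rule. All function names are hypothetical.

```python
import math


def quantum_expectation(theta: float) -> float:
    """Stand-in for the quantum subroutine: <Z> after RY(theta) on |0>, which equals cos(theta)."""
    return math.cos(theta)


def parameter_shift_gradient(theta: float) -> float:
    """Gradient from two extra circuit evaluations at theta +/- pi/2 (parameter-shift rule)."""
    plus = quantum_expectation(theta + math.pi / 2)
    minus = quantum_expectation(theta - math.pi / 2)
    return 0.5 * (plus - minus)


def hybrid_minimize(theta: float = 0.5, learning_rate: float = 0.4, steps: int = 100):
    """Classical optimizer in a loop with the quantum subroutine; returns (theta, energy)."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, quantum_expectation(theta)


if __name__ == "__main__":
    theta, energy = hybrid_minimize()
    print(theta, energy)
```

The loop settles at θ ≈ π, where the expectation value reaches its minimum of −1. The division of labor is the point: the classical side only ever sees scalar expectation values, while the state itself stays on the quantum side.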

The pivot is not just technical. It is a redirection of capital. The dominant AI infrastructure thesis of 2024–2026 — build more GPUs, build more HBM, build more data centers — is reaching diminishing returns on the workloads that matter most. The next infrastructure thesis is quieter and earlier: cryogenic dilution refrigerators, photonic quantum networks, neutral atom arrays. The companies building that infrastructure are smaller, less hyped, and operating on a different time horizon. They are also the only ones building hardware that targets the specific bottleneck.

The Crypto Non-Event

The story most people know about quantum computing is the cryptography one. Shor's algorithm factors integers in polynomial time, breaking RSA. It also breaks elliptic curve cryptography, which secures Bitcoin, Ethereum, and most of the internet's TLS traffic. A sufficiently large quantum computer — a Cryptographically Relevant Quantum Computer, or CRQC — would, in principle, drain the world's wallets and unwrap the world's secrets in an afternoon.
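The structure of the attack on RSA is easier to see with a toy example. Shor's algorithm reduces factoring to finding the multiplicative order of a number modulo N; only that order-finding step needs a quantum computer. The sketch below does the order finding by brute force, which is exactly the part that becomes infeasible classically at real key sizes, and keeps the classical post-processing. It assumes N is an odd composite that is not a prime power, and the function names are illustrative. The attack on elliptic curves uses a related discrete-logarithm variant not shown here.

```python
import math


def multiplicative_order(a: int, n: int) -> int:
    """Smallest r > 0 with a^r = 1 (mod n), by brute force.

    This is the step Shor's algorithm replaces with quantum period finding.
    """
    if math.gcd(a, n) != 1:
        raise ValueError("a must be coprime to n")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_order(n: int, a: int):
    """Classical post-processing: turn the order of a mod n into a factor pair, or None."""
    shared = math.gcd(a, n)
    if shared != 1:
        return shared, n // shared  # the guess already shares a factor with n
    r = multiplicative_order(a, n)
    if r % 2 == 1:
        return None  # odd order: retry with a different a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # trivial square root of 1: retry with a different a
    p = math.gcd(half - 1, n)
    return p, n // p


if __name__ == "__main__":
    print(factor_from_order(15, 7))
```

With N = 15 and a = 7, the order is 4 and the procedure returns the factors 3 and 5. Some choices of a fail and return None, in which case the procedure is repeated with a new guess.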

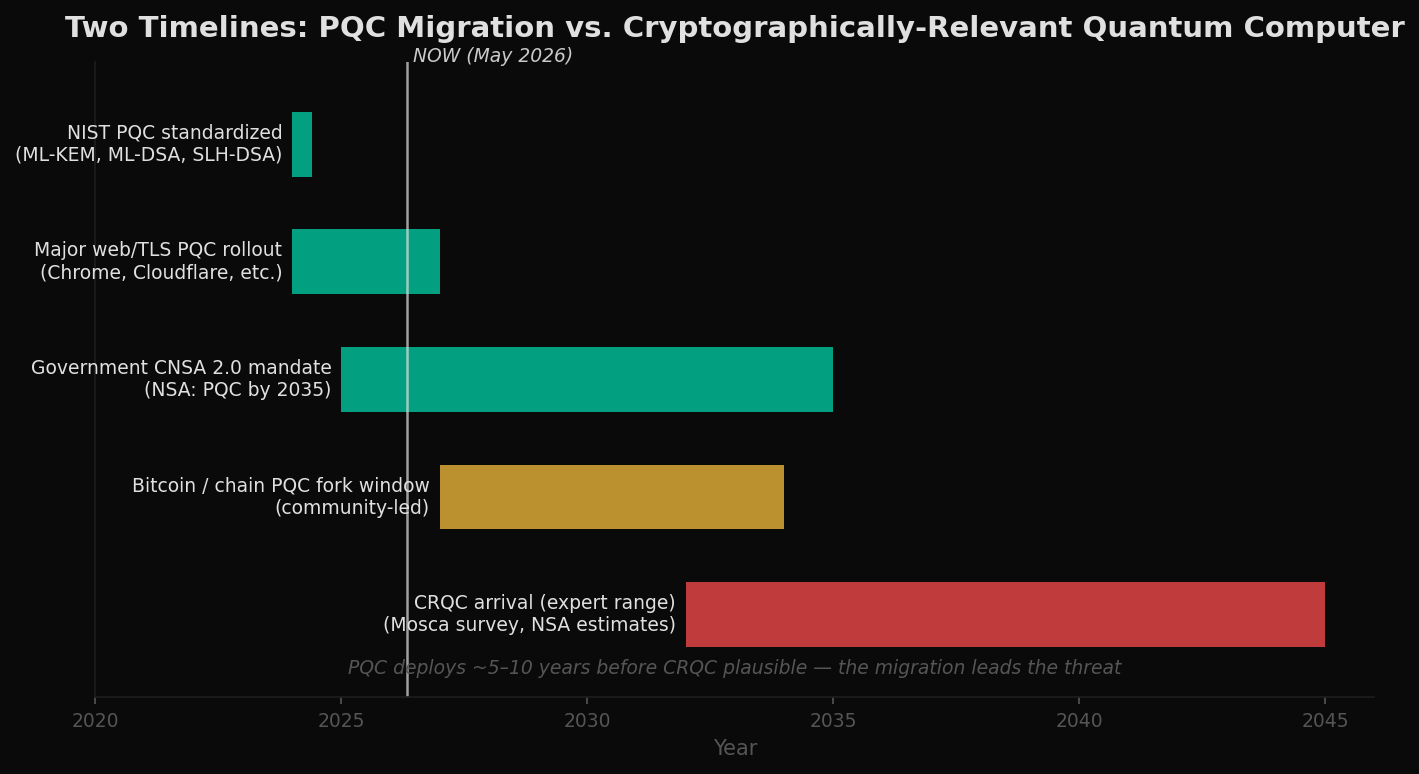

Two facts make this less catastrophic than the headlines suggest. First: the cryptography community has been preparing for this exact event since the late 1990s. Second: the migration to quantum-resistant cryptography is already underway, ahead of any plausible CRQC arrival.

The PQC standards are not theoretical. They are deployed code. As of 2026 a substantial fraction of TLS handshakes on major browsers already use hybrid PQC key exchange. Apple's iMessage uses a quantum-resistant ratchet. Signal has deployed PQC. The infrastructure that protects the internet's trust layer has been quietly hardened, and the migration is on track to be functionally complete years before any quantum machine could threaten it.

Bitcoin and other public chains are slightly different: they cannot upgrade silently. But they can fork. Bitcoin has activated major consensus upgrades at least twice, SegWit in 2017 and Taproot in 2021, both as backward-compatible soft forks that wallets adopted over time. The community knows how to do this. A quantum-resistant signature scheme is one fork away.

Why Crypto Survives

The mechanism that makes the crypto fork tractable is straightforward: the standards already exist, and they fit within the engineering envelope of existing systems.

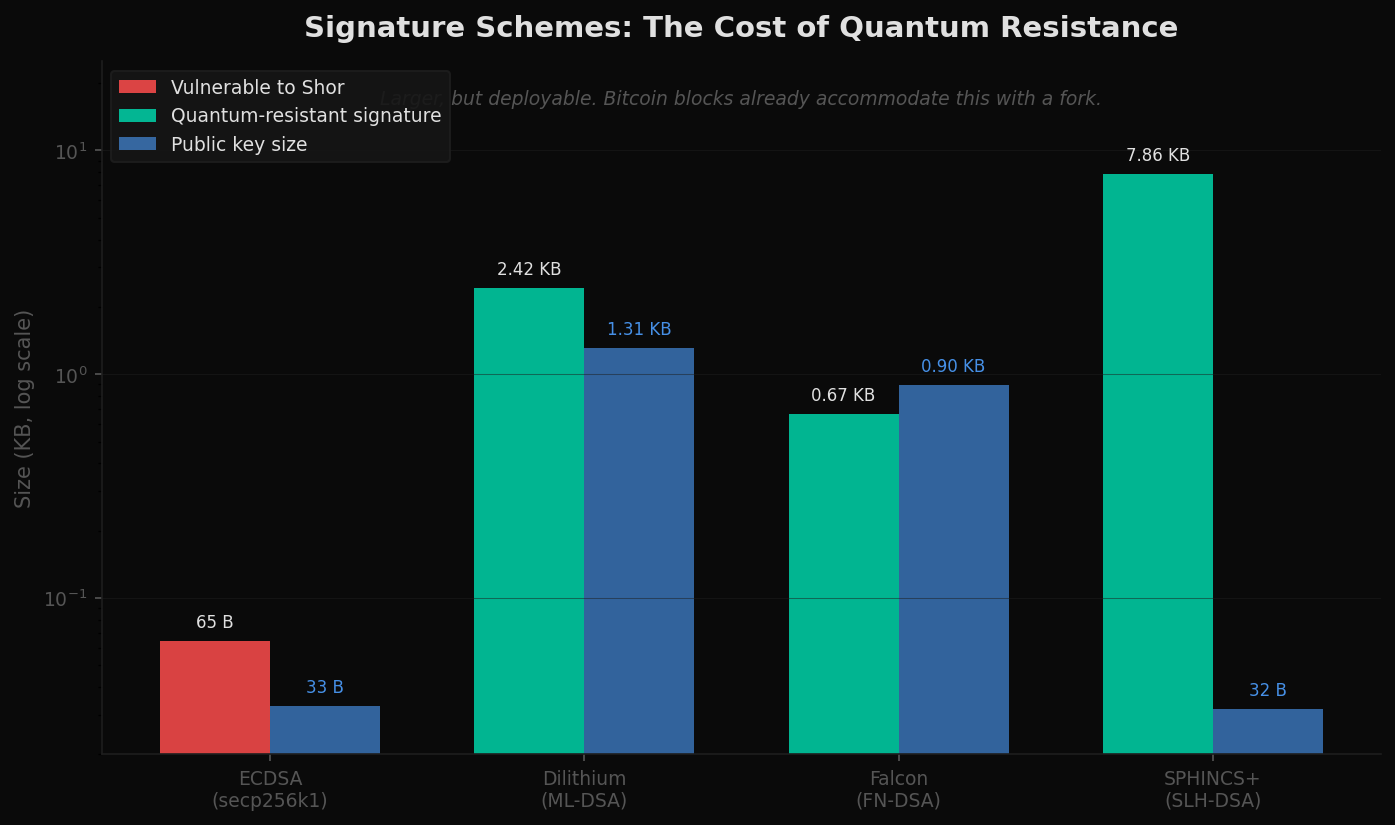

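The post-quantum signature schemes are bigger. They are not impossibly big. Dilithium (standardized as ML-DSA) signatures are about 2.4 KB at the smallest parameter set. Falcon is smaller, under 1 KB at its 512 parameter set. Even SPHINCS+ (standardized as SLH-DSA), the most conservative option in the family, based purely on hash function security with no algebraic assumptions, produces signatures of roughly 8 KB at its smallest parameter set, large but still tractable. For comparison, the ECDSA signatures they would replace are about 70 bytes.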
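To put those sizes in proportion, the sketch below compares each scheme's signature to an ECDSA baseline and counts how many bare signatures fit in one million bytes. The byte counts are assumptions for the smallest parameter set of each scheme (ML-DSA-44, Falcon-512, SLH-DSA-128s) and an upper-end DER-encoded ECDSA signature. Public keys, the rest of the transaction, and any chain-specific weighting rules are ignored.

```python
ECDSA_BYTES = 72  # assumed upper end for a DER-encoded secp256k1 signature

# Assumed signature sizes in bytes at the smallest parameter set of each scheme.
PQC_SIGNATURE_BYTES = {
    "ML-DSA-44 (Dilithium)": 2420,
    "Falcon-512": 666,
    "SLH-DSA-128s (SPHINCS+)": 7856,
}


def size_ratio(sig_bytes: int, baseline: int = ECDSA_BYTES) -> float:
    """How many times larger a signature is than the ECDSA baseline."""
    return sig_bytes / baseline


def signatures_per_budget(sig_bytes: int, budget_bytes: int = 1_000_000) -> int:
    """Whole signatures that fit in a byte budget, counting signatures only."""
    return budget_bytes // sig_bytes


if __name__ == "__main__":
    print("ECDSA baseline:", signatures_per_budget(ECDSA_BYTES), "signatures per 1,000,000 bytes")
    for name, size in PQC_SIGNATURE_BYTES.items():
        print(f"{name}: {size_ratio(size):.1f}x larger, "
              f"{signatures_per_budget(size)} signatures per 1,000,000 bytes")
```

Under these assumptions the post-quantum signatures are roughly 9 to 110 times larger than ECDSA, which is the gap that block size, throughput, and fees would have to absorb.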

For Bitcoin, the storage and bandwidth implications of migrating to a PQC signature are real but manageable: blocks would get larger, transaction throughput would adjust, fees would rebalance. Critically, the migration can be incremental. Funds held under existing keys can be moved to addresses protected by PQC keys before any quantum computer exists that could threaten them. There is a window, measured in years, during which migration is voluntary and ahead of the threat. After that, it becomes mandatory. But the path is well-understood and the timing is forgiving.

The narrative that quantum will kill Bitcoin assumes a sudden surprise — a CRQC appears, every wallet drains, the chain implodes. This is not how the timeline actually unfolds. Quantum hardware capable of breaking ECDSA is years away even under aggressive forecasts. The migration is years ahead of it. The threat is real and the engineering is real. The extinction event is not.

The crypto threat is an engineering problem with known solutions. The reasoning unlock is a frontier without one. That is where the gravitational pull of quantum should redirect attention.

Implications

The current AI conversation centers on a single axis: bigger models, more compute, more memory. The current quantum conversation centers on a single threat: cryptography. Both framings miss the more interesting collision in the middle.

Reasoning is the workload classical AI is hitting walls on. It is also the workload quantum hardware is uniquely suited to accelerate. As fault-tolerant logical qubits arrive in usable numbers — late this decade by aggressive forecasts, early next decade by conservative ones — the AI compute stack will reorganize. Pattern matching stays classical. Reasoning moves quantum. The systems that integrate both layers earliest will define the next phase of capability.

Meanwhile, the cryptographic apocalypse that has been the public face of quantum computing for twenty years has been quietly engineered out of existence. The standards exist. The migrations are underway. The chains can fork. The threat is bounded, telegraphed, and largely solved.

The pivot is structural. Capital, talent, and roadmap pressure will shift toward the reasoning unlock. Crypto will adapt. Bitcoin will not die. And the most important conversation about quantum computing — the one nobody is having loudly enough — is about how machines learn to reason.

Data Sources & Methodology

- Reasoning benchmark trajectories synthesized from published leaderboards and scaling-law research (GSM8K, MATH, ARC-AGI, GPQA, BIG-Bench Hard).

- Quantum complexity figures based on textbook results: Grover (1996), HHL (Harrow, Hassidim, Lloyd 2009), Shor (1994), QAOA (Farhi et al. 2014), and standard surveys of quantum algorithms (Montanaro 2016).

- Speedup figures in Figure 2 are illustrative for problem size N≈10⁶ and reflect best-case quantum advantage under favorable conditions; real-world speedups depend on problem structure, oracle access, and end-to-end I/O costs.

- Vendor qubit-count figures sourced from published roadmaps and press materials from IBM, Google Quantum AI, IonQ, Quantinuum, PsiQuantum, and Atom Computing; values for years beyond 2026 reflect public roadmap commitments and should be treated as targets, not guarantees.

- Two-qubit gate error rates from published experimental results across superconducting, trapped-ion, and neutral-atom platforms.

- Logical qubit counts in Figure 5 assume surface-code error correction with overhead consistent with current literature; alternative codes (e.g., LDPC) could materially shift the timeline.

- Cost trajectories in Figure 6 are illustrative (no firm $/token figures exist for quantum-augmented reasoning workloads) and reflect the structural shape implied by hardware roadmaps and reasoning-workload economics.

- PQC deployment milestones and signature scheme parameters from NIST FIPS 203/204/205 (finalized August 2024) and the OpenSSF/IETF PQC migration tracking.

- CRQC arrival ranges from the Mosca quantum-threat survey, NSA CNSA 2.0 guidance, and recent expert elicitations.

All charts reflect data and estimates as of May 2026.

This analysis is for educational and informational purposes only. It does not constitute financial advice. Projections involve significant uncertainty, particularly across hardware roadmaps and adoption curves, and should not be used as the basis for investment decisions.